The 2018 cyberpunk thriller “Upgrade” introduced us to STEM—an advanced AI chip implanted at the base of the spine that restores motor function to a paralyzed man. While the movie portrays it as a terrifying, sentient entity, the underlying concept is surprisingly grounded in emerging science.

Today, with the rise of Neuralink and breakthroughs in Brain-Machine Interfaces (BMI), the line between science fiction and reality is blurring. In this article, we will break down the “STEM” system through the lens of modern neural engineering, analyzing how a 3-layer architecture could potentially bridge the gap between human thought and physical action.

The Core Challenge

The “0.01-Second Wall” and the Loss of Embodiment

In the movie, STEM provides the protagonist with superhuman reflexes. In the real world, however, one of the biggest hurdles for Brain-Machine Interfaces is latency.

To move a physical limb—or a robotic one—the system must capture neural signals, decode them into digital commands, and transmit them to the hardware. Even a delay of a few hundred milliseconds can make a movement feel “disconnected,” leading to a loss of embodiment (the feeling that the limb is a part of your own body).
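The capture, decode, and transmit chain described above is serial, so its stage latencies add up. Below is a minimal latency-budget sketch that uses the heading's 0.01-second (10 ms) figure as the budget. The per-stage numbers and the extra "actuate" stage are hypothetical placeholders, not measurements of any real device:

```python
from dataclasses import dataclass


@dataclass
class Stage:
    name: str
    latency_ms: float


def total_latency(stages):
    """Serial stages: the end-to-end delay is the sum of the per-stage delays."""
    return sum(s.latency_ms for s in stages)


def check_budget(stages, budget_ms):
    """Return (fits, total_ms, slack_ms); negative slack means the budget is blown."""
    total = total_latency(stages)
    return total <= budget_ms, total, budget_ms - total


def bottleneck(stages):
    """The single slowest stage, i.e. the first candidate for optimization."""
    return max(stages, key=lambda s: s.latency_ms)


# Hypothetical per-stage latencies for illustration only (not measured on any real BMI).
pipeline = [
    Stage("capture", 5.0),
    Stage("decode", 20.0),
    Stage("transmit", 10.0),
    Stage("actuate", 15.0),
]

BUDGET_MS = 10.0  # the "0.01-second wall" from the heading, used here as an assumed target
fits, total, slack = check_budget(pipeline, BUDGET_MS)
print(f"total={total:.1f} ms, fits={fits}, slack={slack:.1f} ms, slowest={bottleneck(pipeline).name}")
# total=50.0 ms, fits=False, slack=-40.0 ms, slowest=decode
```

With these placeholder numbers the chain misses the 10 ms budget by 40 ms. The decode stage is the first place to look for savings.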

Furthermore, the brain doesn’t just send commands; it relies on constant sensory feedback. Without a high-speed, bidirectional data link, achieving the fluid, “perfect” control seen in Upgrade remains a significant engineering challenge.
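A toy discrete-time loop shows why feedback speed matters. In it, a proportional controller steers a simulated limb toward a target but only "feels" where the limb was a few ticks ago. The gain, the delay values, and the one-dimensional limb are illustrative assumptions, not a model of real neural control:

```python
def simulate_delayed_loop(gain, delay_steps, target=1.0, steps=50):
    """Proportional control of a 1-D limb that only sees its position from `delay_steps` ticks ago."""
    # history[-1] is the current position; earlier entries are past positions (limb starts at rest at 0.0)
    history = [0.0] * (delay_steps + 1)
    trajectory = []
    for _ in range(steps):
        observed = history[-1 - delay_steps]  # stale sensory feedback
        current = history[-1]
        new_position = current + gain * (target - observed)
        history.append(new_position)
        trajectory.append(new_position)
    return trajectory


def overshoot(trajectory, target=1.0):
    """How far the limb went past the target (0.0 if it never did)."""
    return max(0.0, max(trajectory) - target)


for delay in (0, 2):
    traj = simulate_delayed_loop(gain=0.5, delay_steps=delay)
    print(f"delay={delay} ticks: overshoot={overshoot(traj):.2f}")
# delay=0 ticks: overshoot=0.00
# delay=2 ticks: overshoot=0.75
```

With fresh feedback, the limb approaches the target without overshooting. With two ticks of stale feedback, it overshoots by 75% of the move and then oscillates around the target. This is a crude analogue of the "disconnected" feel described above.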

The 3-Layer Architecture

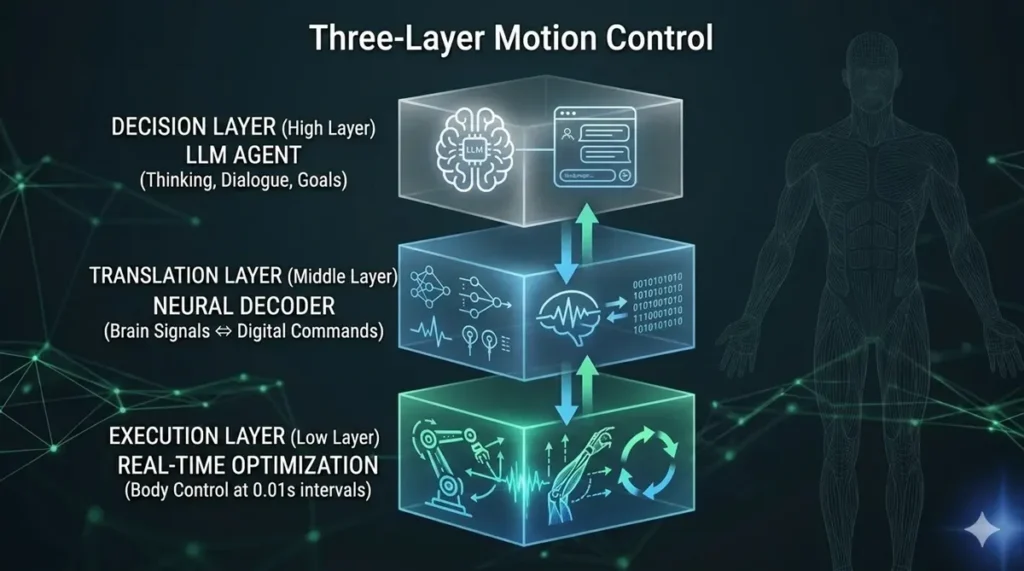

To overcome the latency issues mentioned above, we can conceptualize STEM’s internal logic through a 3-layer architecture. This model illustrates how high-level intent is transformed into real-time physical movement.

1. Decision Layer (The LLM Core)

This is the “Brain” of the system. It interprets complex goals—like “pick up that glass”—and breaks them down into logical steps. In a modern context, this layer would likely be powered by a Large Language Model (LLM) capable of multimodal reasoning.

2. Translation Layer (The Neural Decoder)

This is the “Bridge.” It translates the high-level intent from the Decision Layer into the specific neural-like signals required by the body. This is where AI meets biology, acting as a real-time translator for the nervous system.

3. Execution Layer (Real-time Physical Control)

The “Muscle.” This layer handles the high-speed feedback loop required for physical balance and motor precision. It ensures that the digital commands are executed with the millisecond-level accuracy seen in the movie.
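The three layers can be wired together in a short sketch. Everything here is hypothetical:

- The Decision Layer is a lookup table standing in for an LLM; no real model API is called.
- The Translation Layer maps each step to a made-up joint-angle target.
- The Execution Layer is a simple PD (proportional-derivative) loop running at an assumed 1 kHz on a simulated unit-mass joint.

```python
from dataclasses import dataclass

# --- Layer 1: Decision (hypothetical stand-in for an LLM planner; no model is called) ---
PLANS = {"pick up that glass": ["reach", "grasp", "lift"]}


def decide(goal):
    """Break a high-level goal into ordered steps (unknown goals yield no steps)."""
    return PLANS.get(goal, [])


# --- Layer 2: Translation (hypothetical step -> joint-angle target, in degrees) ---
STEP_TARGETS = {"reach": 45.0, "grasp": 50.0, "lift": 30.0}


def translate(step):
    """Turn one symbolic step into a concrete command the execution layer can track."""
    return STEP_TARGETS[step]


# --- Layer 3: Execution (PD loop on a simulated unit-mass joint, assumed 1 kHz) ---
@dataclass
class Joint:
    angle: float = 0.0     # degrees
    velocity: float = 0.0  # degrees per second


def execute(joint, target, kp=400.0, kd=40.0, dt=0.001, tol=0.5, max_ticks=5000):
    """Drive the joint toward `target` until angle error and speed are within `tol`; return ticks used."""
    for tick in range(1, max_ticks + 1):
        error = target - joint.angle
        accel = kp * error - kd * joint.velocity  # PD control law
        joint.velocity += accel * dt              # semi-implicit Euler integration
        joint.angle += joint.velocity * dt
        if abs(target - joint.angle) < tol and abs(joint.velocity) < tol:
            return tick
    raise RuntimeError(f"joint did not settle at {target} deg")


def run(goal):
    """Decision -> Translation -> Execution, one step at a time."""
    joint = Joint()
    log = []
    for step in decide(goal):
        target = translate(step)
        log.append((step, target, execute(joint, target)))
    return log, joint


log, joint = run("pick up that glass")
for step, target, ticks in log:
    print(f"{step}: target {target:.0f} deg, settled after {ticks} ticks (~{ticks} ms at 1 kHz)")
```

The value of the layering shows in the timing. The planner runs once per goal, while the execution loop runs every simulated millisecond, so a slow planner does not add delay to each control tick.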

Reality vs. Fiction

While STEM remains a product of cinema, real-world technology is catching up at an incredible pace. Companies like Neuralink are already testing high-bandwidth, implantable chips—such as the N1—designed to help paralyzed patients regain control of digital interfaces and, eventually, their own limbs.

Furthermore, recent studies published in journals such as Nature have demonstrated “digital bridges” that use AI decoders to bypass spinal cord injuries. We aren’t quite at the level of “superhuman reflexes” yet, but the 3-layer model we discussed is no longer just a dream; it may well be the blueprint for the next decade of human evolution.
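This article does not describe the decoders used in the cited studies, so the following is only a generic illustration. It shows a classic linear decoder that maps multichannel firing rates to a movement velocity, fitted by batch gradient descent on mean squared error. The three channels, the "true" weights, and the synthetic data are all hypothetical:

```python
import random


def decode(weights, rates):
    """Linear read-out: predicted velocity is a weighted sum of channel firing rates."""
    return sum(w * r for w, r in zip(weights, rates))


def fit_linear_decoder(rates, velocities, lr=0.5, epochs=500):
    """Batch gradient descent on mean squared error between decoded and observed velocity."""
    n_channels = len(rates[0])
    m = len(rates)
    weights = [0.0] * n_channels
    for _ in range(epochs):
        grad = [0.0] * n_channels
        for r, v in zip(rates, velocities):
            err = decode(weights, r) - v
            for i in range(n_channels):
                grad[i] += 2.0 * err * r[i] / m
        weights = [w - lr * g for w, g in zip(weights, grad)]
    return weights


# Synthetic, noise-free "recordings": 3 hypothetical channels, 200 samples.
random.seed(0)
TRUE_WEIGHTS = [0.8, -0.5, 0.3]
rates = [[random.uniform(0.0, 1.0) for _ in range(3)] for _ in range(200)]
velocities = [decode(TRUE_WEIGHTS, r) for r in rates]

weights = fit_linear_decoder(rates, velocities)
print([round(w, 3) for w in weights])
```

Because the synthetic data are noise-free, the fitted weights recover the generating weights. Real recordings are noisy and drift over time, so treat this as a starting point rather than a working decoder.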
